Generative Local Search: Optimizing for "Near Me" in ChatGPT & Perplexity

Generative Local Search is the shift from “rank in the map pack” to “become the highest-confidence local entity” across the data sources AI assistants synthesize. This guide shows how multi-location brands can defend “near me” demand as clicks move to answer engines.

Most local SEO teams already understand the broader shift to AI answers; if you want the big picture first, read “What is Generative Engine Optimization (GEO)?” If you’re building a defensible knowledge graph, pair this with “Entity Density in SEO” and “Structured Data for LLMs.”

Note on scope: assistant behavior varies by engine, mode, and locale. This guide focuses on repeatable operator moves (consistency, evidence, identity hygiene) that improve “near me” performance across systems—even when the exact ranking logic is opaque.

Curiosity gaps (what changes when “near me” becomes generative)

- In our internal tests, assistants sometimes recommend a business that’s farther away when it has stronger, more consistent evidence for the user’s intent—optimizing for a defensible recommendation, not just the closest pin. 1

- Most multi-location brands grind Google Business Profile hygiene, but under-invest in the verification layers assistants use to cross-check claims (directories, map layers, and review sources).

- Review text behaves like semi-structured input: when customers mention truthful specifics (“quiet enough for calls”, “wheelchair ramp”, “same-day crown”), assistants can map that to intent and justify a recommendation.

How to test this yourself (10-minute operator protocol)

Run a weekly “near me” test so this becomes measurable—not a vibe.

- Pick 12 queries: 4 core category (“dentist near me”), 4 attribute intents (“dentist near me for nervous patients”), 2 “open now”, 2 brand+nonbrand.

- Control location: same device, location services on, same neighborhood. Record city/area.

- Run across surfaces: Google Maps plus one assistant UI (Perplexity, or whichever ChatGPT mode you care about).

- Capture evidence: screenshot or copy answer + citations, recommended entities, “confidence language” (hedging).

- Score weekly:

  - Inclusion rate: % of queries where your location appears.

  - Top-3 mention rate: % of queries where you’re in the top set.

  - Attribute match rate: % of attribute queries where you’re recommended for the right reason.

  - Citation presence: % of answers that cite a relevant profile/review page about you.

This gives your team a backlog you can defend.
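The four weekly scores above can be computed from a simple log of each query run. The sketch below is one possible shape for that log, not a prescribed tool: the `QueryResult` fields and the `score_week` helper are hypothetical names, and every value is assumed to be recorded by hand by whoever reviews the screenshots.

```python
from dataclasses import dataclass


@dataclass
class QueryResult:
    """One query from the weekly run, as judged by a human reviewer."""
    query: str
    is_attribute_query: bool  # e.g. "dentist near me for nervous patients"
    included: bool            # your location appears anywhere in the answer
    in_top3: bool             # your location is in the top set
    right_reason: bool        # recommended for the attribute that was asked about
    cited: bool               # answer cites a relevant profile/review page about you


def pct(hits: int, total: int) -> float:
    """Percentage rounded to one decimal; 0.0 when there is nothing to count."""
    return round(100.0 * hits / total, 1) if total else 0.0


def score_week(results: list[QueryResult]) -> dict[str, float]:
    """Compute the four weekly rates from a list of logged query results."""
    # Attribute match rate is measured only over attribute-intent queries.
    attribute_results = [r for r in results if r.is_attribute_query]
    return {
        "inclusion_rate": pct(sum(r.included for r in results), len(results)),
        "top3_mention_rate": pct(sum(r.in_top3 for r in results), len(results)),
        "attribute_match_rate": pct(
            sum(r.right_reason for r in attribute_results), len(attribute_results)
        ),
        "citation_presence": pct(sum(r.cited for r in results), len(results)),
    }
```

Tracking the same dictionary week over week, per location and per surface, is what turns the protocol into a trend rather than a one-off audit.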

Traditional Local SEO vs. Generative Local Search (AI)

| Signal | Traditional Local SEO (Maps) | Generative Local Search (AI) |
|---|---|---|
| Distance | Explicit factor (“distance”); highly visible in map results | Often treated as a constraint (“reasonable distance”), then intent fit decides within bounds |
| Relevance | Category/services + query match | Attribute extraction from review text + entity descriptions drives intent fit |
| Prominence/Trust | Prominence signals + citation/consistency + broader authority | Entity confidence: cross-source agreement + low ambiguity reduces hedging/omission |
| Reviews | Rating + volume + recency | Text specifics become evidence; assistants can paraphrase attributes |
| Structured data | Helpful for the web, indirect impact on maps | Identity grounding layer when it matches external profiles |

Why “proximity is king” is getting weaker (not gone)

In Google local, rankings are shaped by relevance, distance, and prominence—and distance remains a first-class factor. 2 That reality trained a whole industry to obsess over grids and centroid hacks.

Generative Local Search changes the operator problem: assistants frequently try to answer “best match nearby” rather than “closest eligible listing.” For attribute-heavy queries, the assistant needs justification it can stand behind—so it often prefers the business with clearer, cross-source evidence for the specific intent.

So the better claim is:

- “Near me” is increasingly shorthand for reasonable distance + intent match, where evidence richness and consistency can outweigh small distance differences.

The practical takeaway: you can’t control distance, but you can control certainty.
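To make "reasonable distance + intent match" concrete, here is a toy model. It is an illustration of the idea only and makes no claim about how any assistant actually ranks: the `evidence` score, the `max_km` radius, and the candidate dictionaries are all hypothetical inputs. Distance acts as a filter first, then evidence decides the order inside the radius.

```python
import math


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points, in kilometres."""
    earth_radius_km = 6371.0
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlambda = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2
    return 2 * earth_radius_km * math.asin(math.sqrt(a))


def rank_candidates(user: tuple[float, float], candidates: list[dict], max_km: float = 5.0) -> list[str]:
    """Drop candidates outside the radius, then order by evidence (distance breaks ties)."""
    within = []
    for c in candidates:
        distance = haversine_km(user[0], user[1], c["lat"], c["lon"])
        if distance <= max_km:  # distance is a constraint, not the ranking key
            within.append((c, distance))
    # Higher hypothetical evidence score first; closer candidate wins a tie.
    within.sort(key=lambda pair: (-pair[0]["evidence"], pair[1]))
    return [c["name"] for c, _ in within]


candidates = [
    {"name": "A", "lat": 0.0, "lon": 0.005, "evidence": 0.3},  # closest, thin evidence
    {"name": "B", "lat": 0.0, "lon": 0.02, "evidence": 0.9},   # farther, strong evidence
    {"name": "C", "lat": 0.0, "lon": 1.0, "evidence": 1.0},    # outside the radius
]
ranking = rank_candidates((0.0, 0.0), candidates)
```

In this sketch the farther business B outranks the closer A because both sit inside the radius, while C is excluded no matter how strong its evidence is.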

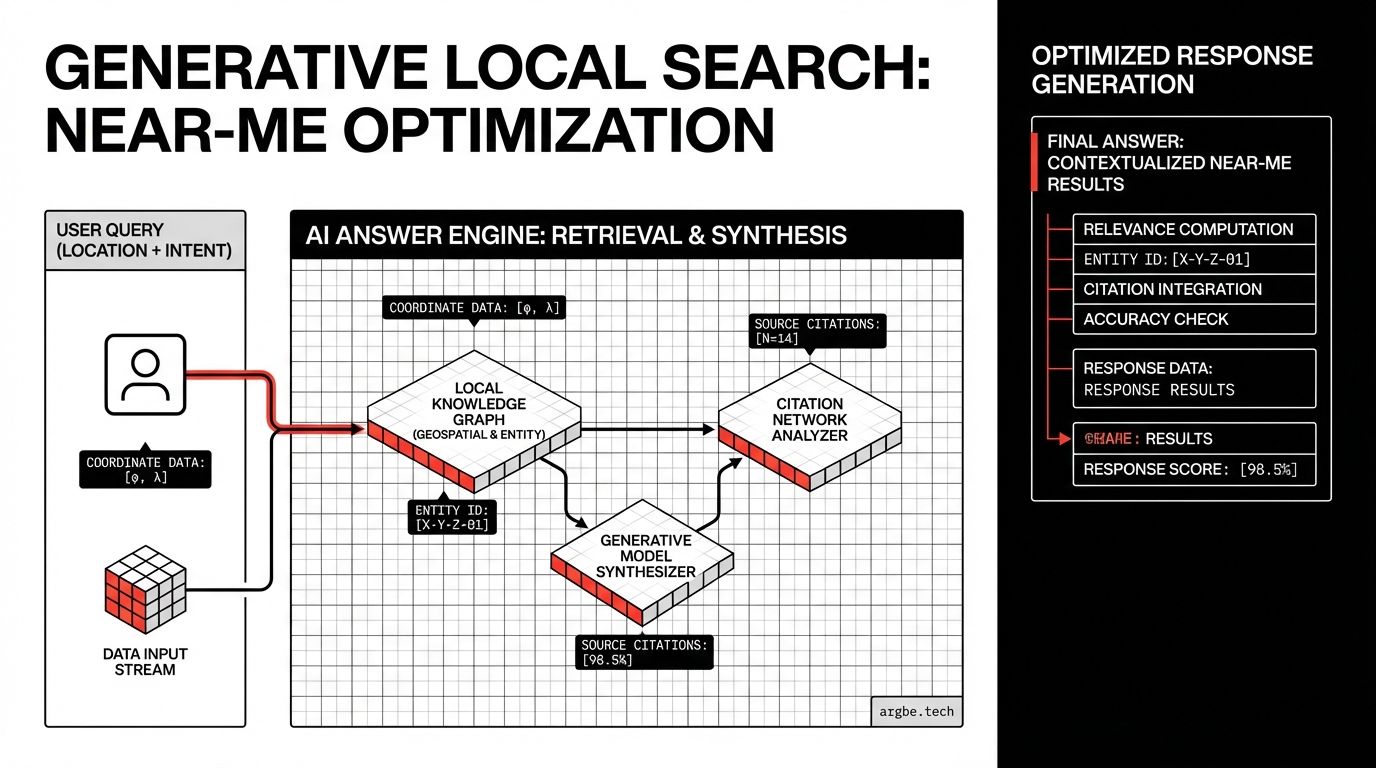

The AI Local Stack (a sanity model)

Assistants synthesize from multiple layers; they don’t behave like one crawler.

- Your site = narrative (what you claim)

- Profiles & directories = verification rails (what others corroborate)

- Map layers = geometry + POI graph (where you are; what you’re near)

- Reviews = attribute evidence (what customers repeatedly describe)

When these layers conflict, assistants often reduce specificity, hedge, or skip you—because “wrong recommendation” is worse than “no recommendation.”

Optimizing review text (without policy risk)

Star ratings still matter—but mostly as a coarse filter. The bigger shift is that assistants can read review text and treat it like semi-structured data: a stream of attributes tied to user intent.

When a user asks “best dentist near me for nervous patients,” assistants look for repeated, concrete signals like:

- “gentle,” “explained everything,” “didn’t rush”

- “sedation options,” “same-day crown”

- “took my insurance,” “wheelchair accessible”
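A rough way to see which of these signals already recur in your own reviews is to count, per attribute, how many reviews mention it. The sketch below assumes a hand-maintained phrase list (`ATTRIBUTE_PHRASES` is hypothetical, seeded from the examples above) and uses plain substring matching, which is far cruder than whatever an assistant does with the text.

```python
import re
from collections import Counter

# Hypothetical attribute -> phrase variants map; extend it per category.
ATTRIBUTE_PHRASES = {
    "gentle_care": ["gentle", "explained everything", "didn't rush"],
    "sedation": ["sedation"],
    "accessibility": ["wheelchair"],
}


def attribute_mentions(reviews: list[str], phrases: dict[str, list[str]] = ATTRIBUTE_PHRASES) -> Counter:
    """Count the number of reviews that mention each attribute at least once."""
    counts = Counter()
    for text in reviews:
        # Lowercase, straighten curly apostrophes, and collapse whitespace.
        normalized = re.sub(r"\s+", " ", text.lower().replace("\u2019", "'"))
        for attribute, variants in phrases.items():
            # Substring match: simple, but "gentle" would also match "gentleman".
            if any(variant in normalized for variant in variants):
                counts[attribute] += 1
    return counts
```

Attributes with a count near zero are candidates for the follow-up question described in the next section, provided the location genuinely delivers them.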

A policy-safe review prompt pattern

You don’t script reviews. You prompt for truthful detail.

Ask one optional follow-up question after a standard “how was your visit?” message, e.g.:

- Coffee shop: “Was it quiet enough to take a call?”

- Dentist: “If you were anxious, what helped?”

- Salon: “Did they run on time?”

Guardrails (non-negotiable):

- No incentives for positive reviews.

- No gating (don’t ask only happy customers).

- Don’t ask for a rating; ask for a truthful detail.

For multi-location brands, a lightweight “attribute menu” works:

- Each location selects 5–8 attributes it reliably delivers (quiet mornings, gluten-free options, same-day appointments, accessible entrance).

- Your prompt rotates one attribute question to encourage specificity over fluff.

Done right, review text becomes durable evidence: hard to fake at scale, valuable across engines, and easy for assistants to justify.
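The attribute menu and rotation described above can be sketched in a few lines. Everything named here is hypothetical: the `MENU` and `QUESTIONS` contents, the `city-center` location id, and the idea of rotating by a running count of sent messages. Two questions are taken from the examples earlier in this section; the rest are illustrative wording that asks for a detail rather than a rating.

```python
# Hypothetical menu: each location lists 5-8 attributes it reliably delivers.
MENU = {
    "city-center": [
        "quiet mornings",
        "gluten-free options",
        "same-day appointments",
        "accessible entrance",
        "on-time service",
    ],
}

# Hypothetical neutral follow-up questions, one per attribute.
QUESTIONS = {
    "quiet mornings": "Was it quiet enough to take a call?",
    "gluten-free options": "Did you find the options you needed on the menu?",
    "same-day appointments": "How soon were you able to get in?",
    "accessible entrance": "How easy was it to get into the building?",
    "on-time service": "Did they run on time?",
}


def follow_up_question(location_id: str, send_count: int, menu: dict, questions: dict) -> str:
    """Pick the single follow-up question for the next review request."""
    attributes = menu[location_id]
    if not 5 <= len(attributes) <= 8:
        raise ValueError(f"{location_id}: menu should hold 5-8 attributes")
    # Rotate through the menu so every customer gets exactly one question.
    attribute = attributes[send_count % len(attributes)]
    return questions[attribute]
```

Because the rotation depends only on the send count, it never looks at who the customer is or how their visit went, which keeps the prompt free of gating.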

Structured data for physical entities (identity > marketing)

Reviews create evidence; structured data creates identity.

Schema.org helps when it matches what the ecosystem already believes. If your markup says one thing and profiles say another, you create conflict—and assistants become cautious.

Minimum viable certainty payload (per location page)

For each location page, publish a LocalBusiness payload with:

- @id (stable, unique per location)

- name (location-specific)

- address (PostalAddress)

- telephone

- geo (GeoCoordinates)

- hasMap

- openingHoursSpecification

- sameAs (only stable, verified profile URLs)

Schema reference: LocalBusiness supports these fields and related types. 3
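Before publishing, it can help to check each location's payload against the field list above. The sketch below is a minimal pre-publish check, assuming payloads are already loaded as Python dictionaries; `REQUIRED_FIELDS`, `missing_fields`, and `duplicate_ids` are hypothetical helper names, and this is not a substitute for a full schema validator.

```python
from collections import Counter

# The minimum fields listed above for each location page.
REQUIRED_FIELDS = (
    "@id",
    "name",
    "address",
    "telephone",
    "geo",
    "hasMap",
    "openingHoursSpecification",
    "sameAs",
)


def missing_fields(payload: dict) -> list[str]:
    """Return required fields that are absent or empty in one payload."""
    return [f for f in REQUIRED_FIELDS if payload.get(f) in (None, "", [], {})]


def duplicate_ids(payloads: list[dict]) -> list[str]:
    """Return any @id values shared by more than one location payload."""
    counts = Counter(p["@id"] for p in payloads if p.get("@id"))
    return sorted(value for value, n in counts.items() if n > 1)
```

An empty result from both functions only shows that the payload is complete and that each `@id` is unique; it says nothing about whether the facts match external profiles.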

Template: multi-location LocalBusiness JSON-LD

```json
{
  "@context": "https://schema.org",
  "@type": "LocalBusiness",
  "@id": "https://example.com/locations/example-city-center#localbusiness",
  "name": "Example Business — City Center",
  "url": "https://example.com/locations/example-city-center",
  "telephone": "REPLACE_ME_WITH_E164_PHONE",
  "address": {
    "@type": "PostalAddress",
    "streetAddress": "123 Example Street",
    "addressLocality": "Example City",
    "addressRegion": "EX",
    "postalCode": "00000",
    "addressCountry": "US"
  },
  "geo": {
    "@type": "GeoCoordinates",
    "latitude": 0,
    "longitude": 0
  },
  "hasMap": "REPLACE_ME_WITH_MAP_URL",
  "openingHoursSpecification": [
    {
      "@type": "OpeningHoursSpecification",
      "dayOfWeek": [
        "Monday",
        "Tuesday",
        "Wednesday",
        "Thursday",
        "Friday"
      ],
      "opens": "09:00",
      "closes": "17:00"
    }
  ],
  "sameAs": [
    "REPLACE_ME_WITH_GOOGLE_PROFILE_URL",
    "REPLACE_ME_WITH_APPLE_MAPS_URL",
    "REPLACE_ME_WITH_BING_MAPS_URL",
    "REPLACE_ME_WITH_YELP_URL"
  ]
}
```

Operational rule: one truth, many surfaces

Once per-location schema exists, your workflow gets simpler:

- update hours/services/contact on the canonical location page

- propagate the same facts to profiles

- reduce conflicts → reduce hedging → increase “safe recommendation” likelihood
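The workflow above implies a recurring check: compare the canonical facts with what each profile currently shows. The sketch below assumes the profile facts are collected by hand or exported from a listings tool into dictionaries; the source names (`directory_a`, `directory_b`), the compared fields, and the `find_conflicts` helper are all hypothetical.

```python
import re


def normalize_phone(value: str) -> str:
    """Keep digits only. Does not reconcile a missing country code."""
    return re.sub(r"\D", "", value)


def normalize_text(value: str) -> str:
    """Lowercase and collapse whitespace so formatting noise is ignored."""
    return re.sub(r"\s+", " ", value.strip().lower())


# Fields to compare and how to normalize each before comparing.
NORMALIZERS = {
    "name": normalize_text,
    "telephone": normalize_phone,
    "streetAddress": normalize_text,
}


def find_conflicts(canonical: dict, profiles: dict) -> list[tuple[str, str, str]]:
    """Return (source, field, profile_value) for every fact that disagrees."""
    conflicts = []
    for source, facts in profiles.items():
        for field, normalize in NORMALIZERS.items():
            if field not in facts:
                continue  # a missing field is not treated as a conflict here
            if normalize(facts[field]) != normalize(canonical[field]):
                conflicts.append((source, field, facts[field]))
    return conflicts
```

The comparison is deliberately strict: "123 Example St" and "123 Example Street" are reported as a conflict, and a person decides whether that difference is worth fixing on the profile.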