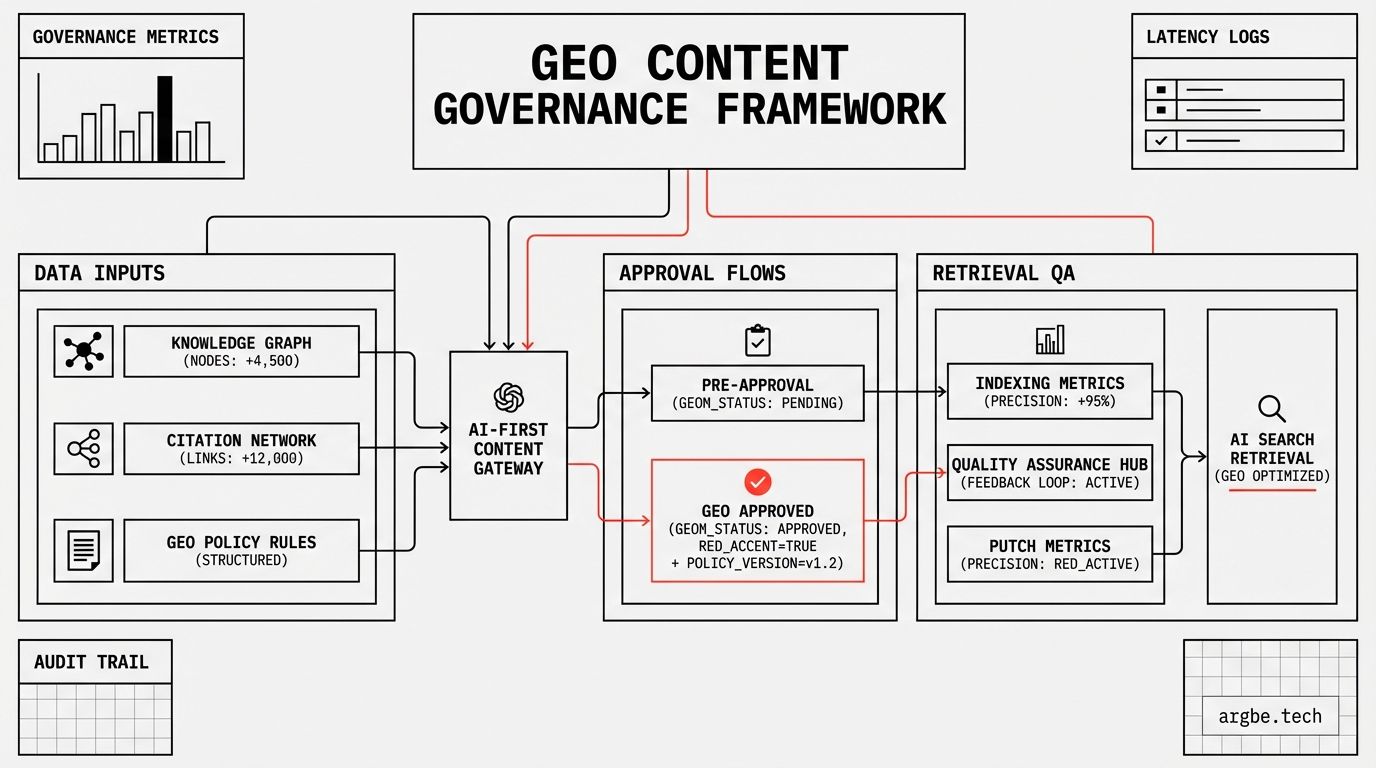

GEO Content Governance: Policies, Approval Flows, and Retrieval QA for AI-First Search

GEO Content Governance is how content leaders keep brand facts consistent across docs, marketing, and sales so AI answer engines retrieve one truth. This guide outlines the policies, approval flow, and retrieval QA needed to reduce misquotes at scale.

If you want the foundation first, start with “What is Generative Engine Optimization (GEO)?” For the schema mechanics referenced in this guide, read “Structured Data for LLMs.” For the entity layer behind “truth consistency,” read “Entity Density in SEO.” For the business outcome, see “AI Citation Strategy.”

Curiosity gaps (click-through drivers)

- Most marketing teams unknowingly feed AI models contradictory pricing details—there’s a simple Golden Record fix, but it requires changing how you write “friendly” copy.

- In our audits, “zombie pages” (outdated but still indexable) are a common cause of brand misquotes in Gemini-style experiences. The solution is not deleting them; it’s archiving them with time-bounded signals.

- Retrieval QA adds one uncomfortable step to publishing: ask the AI about your product before you hit publish, then fix what it can’t extract.

SEO Governance vs. GEO Content Governance (AI-first)

| Governance Component | Traditional SEO Focus | GEO / AI Focus | Risk of Neglect |
|---|---|---|---|
| Source of truth | Page-by-page optimization | Central Golden Record for facts | Contradictions become “it depends” answers |
| Policy | Meta + keyword hygiene | Fact ownership + change control | Stale numbers drift across docs and decks |
| Review | Editorial + brand voice | Retrieval QA + extraction clarity | Key claims become non-retrievable |
| Technical | Crawl/index + templates | Schema.org validation + entity IDs | Parsers fail; engines guess |
| Lifecycle | Publish, then move on | Update/Archive with time bounds | Zombie pages outcompete the current truth |

The High Cost of Inconsistent Truth

AI systems don’t “believe” your site. They assign probabilities to competing claims, then answer with whatever is most defensible in context.

When two pages disagree on a hard fact, you create a low-confidence zone around that attribute. The model’s safest move is to hedge (“pricing varies”), omit the number entirely, or merge the facts into a new answer that neither page intended.

That’s the operational cost of inconsistency: you don’t just lose traffic—you lose message control.

Teams feel this first in pricing and packaging. One page says “$50 per user,” another says “$40 for early customers,” a third says “contact sales.” You didn’t “test positioning”; you contaminated the fact space.

We call this Entity Contamination: once conflicting facts exist across indexable URLs, the system can’t confidently assign a single value to the brand attribute—so it reduces specificity everywhere that attribute appears.

The result is measurable in language. When your claims are coherent, answers are crisp. When your claims conflict, answers start to include qualifiers, ranges, and vague caveats.
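A crude but workable way to make this measurable is to count hedge markers in the answers you sample before and after a cleanup. The pattern list below is our assumption, not a standard metric, so tune it to your category:

```python
import re

# Assumed hedge markers; these are illustrative, not an industry standard list.
HEDGE_PATTERNS = [
    r"\bvar(?:y|ies)\b",
    r"\bdepends?\b",
    r"\bmay\b",
    r"\bmight\b",
    r"\bapproximately\b",
    r"\bcontact sales\b",
    r"\bbetween \$?\d+ and \$?\d+\b",  # a range instead of a single value
    r"\$?\d+\s*-\s*\$?\d+",  # a hyphenated range such as $40-$50
]


def hedge_score(answer: str) -> int:
    """Count hedge markers in one AI answer; lower means crisper."""
    text = answer.lower()
    return sum(len(re.findall(pattern, text)) for pattern in HEDGE_PATTERNS)
```

Track the average score per attribute (pricing, SLA, limits) over time; a rising score on one attribute is a hint that conflicting claims about it are live somewhere.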

This is also why brand safety in AI isn’t a PR problem; it’s an engineering problem. The model’s job is to produce a plausible response. Your job is to remove ambiguity so the plausible response is also correct.

If you lead a mid-to-large content org, the failure mode is predictable:

- Documentation ships a precise truth.

- Marketing ships a simplified truth.

- Sales collateral ships a negotiated truth.

Now your brand has three “truths,” and the machine has to pick one. That’s the moment your Confidence Score drops and your narrative starts to drift.

Protocol 1: The Golden Record Strategy

A Golden Record is not a spreadsheet. It’s a product: versioned, addressable, and designed to be referenced by systems instead of rewritten by people.

The rule is simple: don’t hardcode facts in prose when the fact is supposed to be stable. Write the explanation around the fact, but source the value from the record.

This shifts governance from “policing writers” to “shipping truth once.”

In practice, your Golden Record is a JSON/YAML layer that powers the UI and feeds your content templates. That’s how you eliminate version conflicts across pages without asking creative teams to remember the current number.

Here’s what that looks like when the fact is pricing and SLA:

```json
{
  "golden_record": {
    "pricing_model": "Fixed weekly rate",
    "sla_first_response": {
      "statement": "Typically within 24 hours",
      "typical_hours": 24,
      "source_url": "https://argbe.tech/contact"
    }
  },
  "rendered_copy_example": {
    "pricing": "Fixed weekly rate",
    "sla": "Typically within 24 hours"
  }
}
```

If you want a concrete “fanout” pattern, make facts addressable by path and render them inline:

- Pricing model (`golden_record.pricing_model`): Fixed weekly rate

- First response (`golden_record.sla_first_response.statement`): Typically within 24 hours
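A minimal sketch of that path-based rendering, assuming a hypothetical `{{dotted.path}}` placeholder syntax in your templates. It resolves each path against the Golden Record and refuses to render a nested object, which is a common way `[object Object]`-style strings leak into published copy:

```python
import re
from typing import Any

# The Golden Record from the JSON example, as a Python dict.
GOLDEN_RECORD: dict[str, Any] = {
    "golden_record": {
        "pricing_model": "Fixed weekly rate",
        "sla_first_response": {
            "statement": "Typically within 24 hours",
            "typical_hours": 24,
            "source_url": "https://argbe.tech/contact",
        },
    }
}

# Hypothetical template syntax: {{golden_record.pricing_model}}
PLACEHOLDER = re.compile(r"\{\{\s*([\w.]+)\s*\}\}")


def resolve(record: dict[str, Any], path: str) -> Any:
    """Walk a dotted path such as 'golden_record.pricing_model'."""
    node: Any = record
    for key in path.split("."):
        if not isinstance(node, dict) or key not in node:
            raise KeyError(f"Unknown fact path: {path}")
        node = node[key]
    return node


def render(template: str, record: dict[str, Any]) -> str:
    """Replace every placeholder with its fact; reject non-leaf values."""
    def substitute(match: re.Match) -> str:
        value = resolve(record, match.group(1))
        if isinstance(value, (dict, list)):
            # Printing a whole object is how "[object Object]" ends up in copy.
            raise TypeError(f"{match.group(1)} is not a leaf fact")
        return str(value)

    return PLACEHOLDER.sub(substitute, template)
```

For example, `render("Pricing model: {{golden_record.pricing_model}}", GOLDEN_RECORD)` returns `"Pricing model: Fixed weekly rate"`. Failing loudly on unknown paths and object values is the design choice that matters: a broken build is cheaper than a broken fact on a live page.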

That’s not a gimmick. It’s how you keep every page consistent when your content surface area grows.

The provocation: “creative” teams become liabilities when they improvise facts. Creativity is fine in framing; it’s dangerous in numbers, constraints, and definitions.

Your governance policy should reflect that reality:

- Fact fields (prices, limits, availability, SLAs) must be rendered from the record.

- Interpretation fields (positioning, examples, use cases) can be written freely—within approved boundaries.

- New facts require an explicit change request to the record, not a quiet tweak in a blog post.
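The first and third rules can be partly enforced with a lint on drafts. This sketch assumes fact values enter copy only through `{{...}}` placeholders (a hypothetical template convention) and flags fact-shaped strings typed directly into prose; the patterns are illustrative, so expect to tune them:

```python
import re

# Illustrative patterns for values that should come from the Golden Record.
FACT_PATTERNS = {
    "price": re.compile(r"\$\s?\d[\d,]*(?:\.\d+)?"),
    "duration": re.compile(r"\b\d+\s*(?:hours?|days?|business days?)\b", re.IGNORECASE),
    "percentage": re.compile(r"\b\d+(?:\.\d+)?%"),
}
PLACEHOLDER = re.compile(r"\{\{\s*[\w.]+\s*\}\}")


def find_hardcoded_facts(draft: str) -> list[tuple[str, str]]:
    """Return (kind, text) for every fact-like value typed directly into prose."""
    prose = PLACEHOLDER.sub("", draft)  # values rendered from the record are allowed
    findings: list[tuple[str, str]] = []
    for kind, pattern in FACT_PATTERNS.items():
        findings.extend((kind, match.group(0)) for match in pattern.finditer(prose))
    return findings
```

A non-empty result does not prove the draft is wrong; it means a fact owner should confirm the value or replace it with a placeholder.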

This is where entity clarity becomes practical. When the same attribute shows up with the same value across URLs, Entity Salience stays stable during retrieval, and the system has fewer reasons to guess.

Protocol 2: Retrieval QA (Pre-Publish)

Retrieval QA is a pre-publish pass that asks: “If an answer engine pulls only this page, will it extract the right claim without improvising?”

This is different from proofreading. You’re not checking grammar—you’re checking extractability.

Schema.org gives the Knowledge Graph a clean parse path for what the page is and which claims are in-bounds.

In Content Operations terms, this is just a release gate: if markup and visible text disagree, the build fails.
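A minimal version of that gate, assuming you already have the page's JSON-LD as a dict and its visible text as a string. It flags any markup value that never appears in the copy. Substring matching is a deliberate simplification, and `IGNORED_KEYS` is a hypothetical list of fields (URLs, images) that are not meant to be visible:

```python
from typing import Any

# Hypothetical: keys whose values are not expected to appear in visible copy.
IGNORED_KEYS = {"url", "sameAs", "image", "logo"}


def leaf_values(node: Any) -> list[str]:
    """Flatten JSON-LD into leaf values, skipping @-keywords and ignored keys."""
    if isinstance(node, dict):
        values: list[str] = []
        for key, value in node.items():
            if key.startswith("@") or key in IGNORED_KEYS:
                continue
            values.extend(leaf_values(value))
        return values
    if isinstance(node, list):
        return [leaf for item in node for leaf in leaf_values(item)]
    return [str(node)]


def markup_mismatches(json_ld: dict, visible_text: str) -> list[str]:
    """Markup values that never appear (case-insensitively) in the visible text."""
    text = visible_text.lower()
    return [value for value in leaf_values(json_ld) if value.lower() not in text]
```

In CI, fail the build (for example with `raise SystemExit(1)`) whenever the list is non-empty. Expect false negatives for short numbers: `50` is "found" inside `$150`.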

The fastest way to do it is to run two checks that mimic how modern pipelines behave:

- Parser check: validate your Schema.org and make sure your tables and definitions are easy to lift.

- Reasoner check: paste the section into an LLM and ask it to extract your key facts as a list.
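The parser check can start as a structural smoke test before you reach for a full validator. This sketch uses only the Python standard library to pull every `application/ld+json` block out of a page, confirm it parses as JSON, and confirm each item declares `@context` and `@type`. It does not check Schema.org vocabulary; that is what a dedicated validator is for:

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect the raw text of every <script type="application/ld+json"> block."""

    def __init__(self) -> None:
        super().__init__()
        self.blocks: list[str] = []
        self._in_json_ld = False

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_json_ld = True
            self.blocks.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_json_ld = False

    def handle_data(self, data):
        if self._in_json_ld:
            self.blocks[-1] += data  # script content may arrive in chunks


def parser_check(html: str) -> list[str]:
    """Return problems: no JSON-LD, invalid JSON, or items missing @context/@type."""
    extractor = JsonLdExtractor()
    extractor.feed(html)
    extractor.close()
    if not extractor.blocks:
        return ["no JSON-LD found"]
    problems: list[str] = []
    for i, raw in enumerate(extractor.blocks):
        try:
            data = json.loads(raw)
        except json.JSONDecodeError as err:
            problems.append(f"block {i}: invalid JSON ({err.msg})")
            continue
        for item in data if isinstance(data, list) else [data]:
            if not isinstance(item, dict):
                problems.append(f"block {i}: expected a JSON object")
                continue
            for key in ("@context", "@type"):
                if key not in item:
                    problems.append(f"block {i}: missing {key}")
    return problems
```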

If the model can’t extract it, don’t assume Google will treat it as a stable fact.

This matters even more if your product ships a support assistant that uses Retrieval-Augmented Generation (RAG). In that world, your own content becomes the model’s knowledge base—so contradictions don’t just harm marketing; they harm product UX.
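To see why, here is a toy retriever over three chunks written by docs, marketing, and sales. It ranks by word overlap rather than embeddings (a deliberate simplification), and the chunks and prices are invented for illustration; even so, a single pricing question pulls two different prices into the context the assistant would answer from:

```python
import re
from collections import Counter

# Hypothetical knowledge-base chunks; note the conflicting prices.
CHUNKS = {
    "docs/pricing": "Price per user is $50 per month on the standard plan.",
    "blog/launch": "Early customers pay $40 per user per month.",
    "sales/deck": "Price per user depends on volume; contact sales.",
}


def tokens(text: str) -> Counter:
    """Lowercased word tokens, keeping $ so prices survive as tokens."""
    return Counter(re.findall(r"[a-z0-9$]+", text.lower()))


def retrieve(query: str, k: int = 2) -> list[str]:
    """Rank chunk IDs by token overlap with the query (a stand-in for vector search)."""
    query_tokens = tokens(query)
    scores = {cid: sum((query_tokens & tokens(text)).values()) for cid, text in CHUNKS.items()}
    return sorted(scores, key=scores.get, reverse=True)[:k]


def prices_in_context(query: str, k: int = 2) -> set[str]:
    """Every price string the generator would see for this query."""
    return {p for cid in retrieve(query, k) for p in re.findall(r"\$\d+", CHUNKS[cid])}
```

Here `prices_in_context("price per user per month")` contains both `$50` and `$40`, so the answer depends on how the generator resolves a conflict you created.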

The Retrieval QA Checklist (SOP)

| Phase | Check Item | Validation Tool |
|---|---|---|
| Pre-write | Confirm which facts must come from the Golden Record | Golden Record diff + owner sign-off |
| Draft | Ensure the definition is one-paragraph extractable | Manual read + “copy/paste test” |
| Markup | Validate Schema.org matches visible claims | Schema Validator + build-time lint |
| Extraction | Ask an LLM: “Extract pricing + constraints as JSON” | Claude/ChatGPT prompt test |
| Conflict | Search site for competing values (old numbers, old limits) | Repo search + site: query |
| Pre-publish | Run “answer preview”: ask the AI, then compare | Internal prompt set + rubric |
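The Conflict row can be partly automated. A minimal sketch, assuming the Golden Record keeps a hypothetical list of retired values for each fact (the field names and values here are illustrative, not from a real record), and that you can load page text from your repo or a crawl:

```python
# Hypothetical: each fact keeps the values it has replaced.
RETIRED_VALUES = {
    "pricing_model": ["$40 per user", "$50 per user"],
    "sla_first_response": ["within 48 hours"],
}


def find_zombie_values(pages: dict[str, str]) -> list[tuple[str, str, str]]:
    """Return (url, fact, retired value) for every stale value still published."""
    hits: list[tuple[str, str, str]] = []
    for url, text in pages.items():
        lowered = text.lower()
        for fact, retired in RETIRED_VALUES.items():
            for value in retired:
                if value.lower() in lowered:
                    hits.append((url, fact, value))
    return hits
```

Keeping retired values next to the current one turns “search for old numbers” into a repeatable query instead of a memory test.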

HowTo: run Retrieval QA in one pass

- Paste your Direct Answer definition and the “facts” section into your validator prompt.

- Ask for extraction: “Return pricing, constraints, and SLA as JSON.”

- Compare output to the Golden Record; fix mismatches in the record or the page.

- Validate schema for the page type and ensure it matches visible text.

- Re-run extraction until the output is stable and specific (no hedging).
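The compare and re-run steps are easy to script once the model's reply is JSON. This sketch assumes you already collected the raw reply text from whichever assistant you test (the API call is deliberately left out), and that `EXPECTED` is a hypothetical flattening of the Golden Record into the same keys you asked for:

```python
import json

# Hypothetical flattening of the Golden Record into the extraction keys.
EXPECTED = {"pricing": "Fixed weekly rate", "sla": "Typically within 24 hours"}


def compare_extraction(raw_output: str, expected: dict[str, str]) -> dict[str, tuple]:
    """Parse one model reply and return {field: (expected, got)} for every mismatch."""
    got = json.loads(raw_output)
    return {
        field: (want, got.get(field))
        for field, want in expected.items()
        if str(got.get(field, "")).strip().lower() != want.lower()
    }


def is_stable(raw_outputs: list[str]) -> bool:
    """True when repeated extraction runs agree exactly after parsing."""
    parsed = [json.loads(raw) for raw in raw_outputs]
    return all(item == parsed[0] for item in parsed)
```

A mismatch tells you which side to fix (record or page); instability across runs usually means the page still gives the model room to improvise.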

The operational win is speed. When Retrieval QA is a gate, it prevents the slowest kind of work: emergency cleanups after an AI answer misrepresents you in public.

Protocol 3: Managing ‘Zombie’ Content

Zombie content is any page that is still retrievable but no longer true.

You can’t solve that with “be careful.” You solve it with a lifecycle policy that treats outdated truth as an incident, not a quirk.

The policy is binary:

- Update: keep the URL, refresh the facts, and re-run Retrieval QA.

- Archive: keep the URL for historical value, but add time-bounded signals so the old truth stops competing with the new one.

Schema can help you archive without deleting. For time-sensitive claims, use properties like validThrough to communicate that a claim expires, then pair it with visible on-page dates so humans and parsers agree. 3
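A minimal sketch of time-bounded markup, assuming the retired claim can be modeled as a Schema.org `Offer` (a type that does define `validThrough`). The helper names and the early-customer offer are hypothetical, and the visible on-page date still has to be added to the page itself:

```python
import json
from datetime import date


def offer_json_ld(name: str, description: str, valid_through: date) -> dict:
    """Schema.org Offer whose claim expires after valid_through."""
    return {
        "@context": "https://schema.org",
        "@type": "Offer",
        "name": name,
        "description": description,
        "validThrough": valid_through.isoformat(),
    }


def is_expired(offer: dict, today: date) -> bool:
    """True once today is past the offer's validThrough date."""
    valid_through = offer.get("validThrough")
    if valid_through is None:
        return False  # no expiry declared, so treat the claim as current
    return today > date.fromisoformat(valid_through[:10])


def to_script_tag(offer: dict) -> str:
    """Embed the markup as a JSON-LD script tag."""
    return f'<script type="application/ld+json">{json.dumps(offer)}</script>'
```

The same `is_expired` check can drive a lifecycle report: any page whose markup has expired but is still linked from current pages is a zombie candidate.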

This is how you avoid the worst failure mode: an old blog post outranks your current pricing page for a query, and that stale post is the one the assistant decides to cite.

In Content Operations terms, zombie management is just change control applied to URLs. The only “new” part is accepting that AI retrieval makes forgotten pages dangerous again.

Approval flow (the minimum you need)

If governance feels like bureaucracy, it’s usually because the flow isn’t tied to a machine-checkable outcome.

Here’s the minimum flow we’ve seen work in large teams:

- Policy: define which fields are “facts” vs “interpretation.”

- Ownership: assign a fact owner (one person/team) for each fact domain.

- Gate: require Retrieval QA for any page that introduces or modifies a fact.

- Monitor: sample AI answers monthly for drift, then back-propagate fixes to the record.
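The gate step can be expressed as a check your CMS or CI runs on every change. The owner map, team names, and `Change` fields below are hypothetical; the point is that a fact change stays blocked until its owner signs off and Retrieval QA has run:

```python
from dataclasses import dataclass, field

# Hypothetical ownership map: fact domain -> accountable owner.
FACT_OWNERS = {"pricing": "revops", "sla": "support"}


@dataclass
class Change:
    page: str
    facts_touched: set[str]
    approvals: set[str] = field(default_factory=set)
    retrieval_qa_passed: bool = False


def blocking_reasons(change: Change) -> list[str]:
    """Why this change cannot ship yet; an empty list means the gate is open."""
    reasons: list[str] = []
    for fact in sorted(change.facts_touched):
        owner = FACT_OWNERS.get(fact)
        if owner is None:
            reasons.append(f"{fact}: no owner assigned (policy gap)")
        elif owner not in change.approvals:
            reasons.append(f"{fact}: needs sign-off from {owner}")
    if change.facts_touched and not change.retrieval_qa_passed:
        reasons.append("retrieval QA not run")
    return reasons
```

Interpretation-only edits (no facts touched) pass straight through, which keeps the gate from feeling like bureaucracy for the majority of changes.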

If you want Argbe.tech to implement this as a deployable system

We package GEO governance as a fixed-scope build: Golden Record setup, Retrieval QA gates, and content lifecycle rules, followed by a handoff your team can run. Pricing model: Fixed weekly rate.