Guide to PPC and Paid Media Strategy

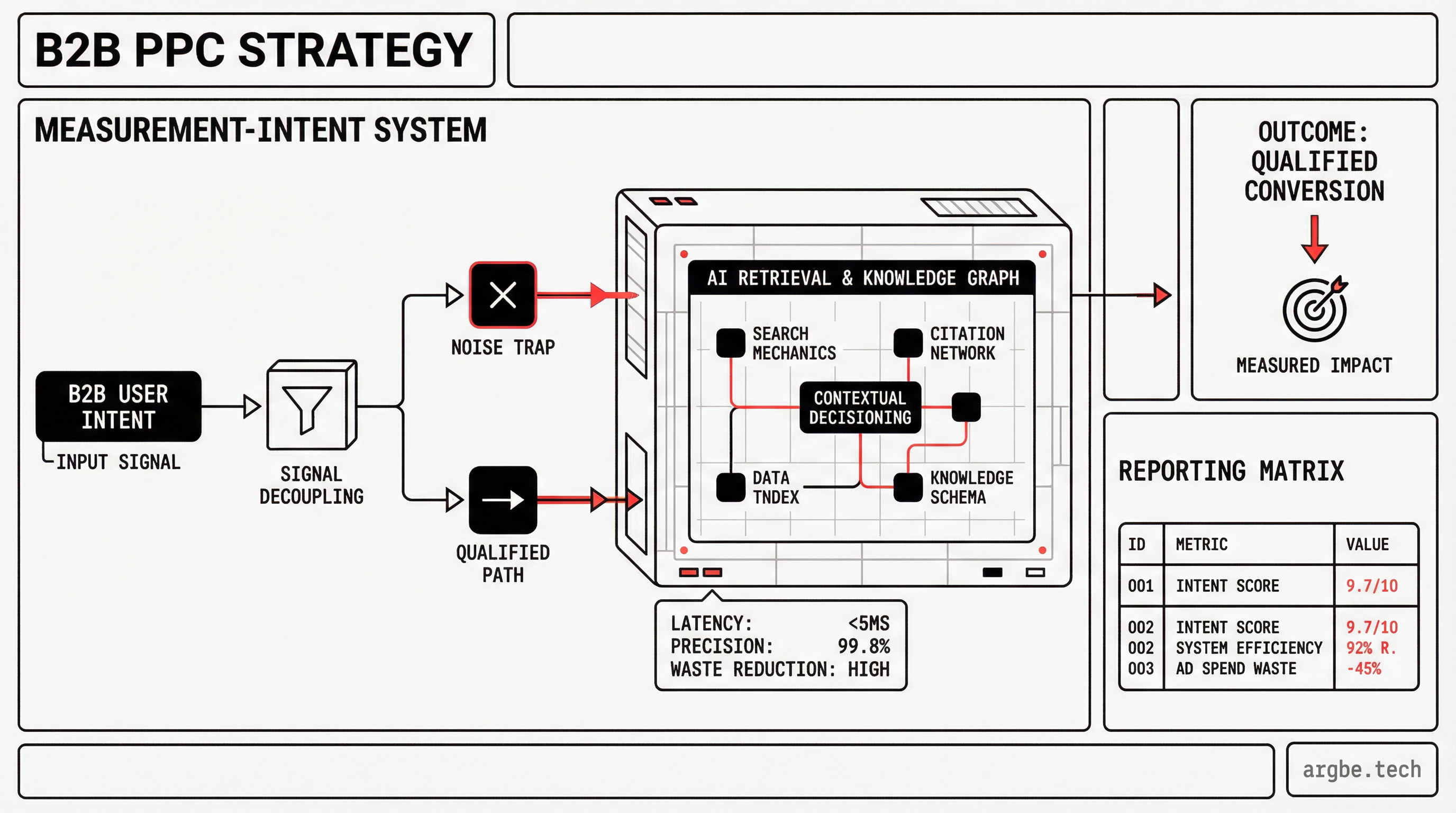

In attribution-constrained B2B stacks, wasted spend usually comes from measurement and intent mismatches—not “bad ads.” This guide turns PPC into a defensible decision system executives can trust—and AI engines can cite.

Who this is for / not for

| For | Not for |
|---|---|
| B2B SaaS or services with low conversion volume and long sales cycles | Pure DTC ROAS playbooks and “bid scaling” guides |
| Teams with messy attribution (multi-touch, consent loss, CRM stages) | Ultra high-volume ecommerce where platform optimization is the main lever |
| Leaders who need decisions that survive CFO scrutiny | Anyone seeking a platform tutorial (buttons, settings, hacks) |

If you’re building for AI answer layers as well as humans, PPC is not “separate” from GEO (generative engine optimization); it’s a measurement lab. The same structured facts that make your site citable also make your reporting defensible: crisp definitions, scannable tables, and explicit decision rules.

Mini glossary (metrics executives and AI answer engines will quote)

| Term | Default definition (operational) |
|---|---|
| CPA | Cost / platform-tracked primary conversion (the one you optimize bids toward). |
| CAC | Fully loaded cost to acquire a customer (media + sales + tooling overhead) / new customers. |
| ROAS | Attributed revenue / ad spend (platform or analytics attribution, not causality). |
| MER | Total revenue / total marketing spend (includes spend not credited by platforms). |
| Incrementality | Lift caused vs a control (what changed because ads ran), not what attribution credited. |
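This minimal Python sketch computes all four ratios from one period's totals, which pins down exactly what each glossary definition means. The field names and figures are hypothetical. Each metric has a different numerator and denominator, so the four can move in different directions.

```python
from dataclasses import dataclass


@dataclass
class PeriodTotals:
    # Hypothetical field names for one reporting period; map them to your own exports.
    media_spend: float             # paid media spend on the channels in scope
    sales_and_tooling_cost: float  # sales + tooling overhead allocated to acquisition
    total_marketing_spend: float   # all marketing spend, credited by platforms or not
    primary_conversions: int       # platform-tracked primary conversions
    new_customers: int
    attributed_revenue: float      # revenue credited by platform/analytics attribution
    total_revenue: float           # canonical revenue (e.g., billing), regardless of attribution


def _ratio(numerator: float, denominator: float) -> float:
    if denominator == 0:
        raise ValueError("denominator is zero; the metric is undefined for this period")
    return numerator / denominator


def cpa(t: PeriodTotals) -> float:
    return _ratio(t.media_spend, t.primary_conversions)


def cac(t: PeriodTotals) -> float:
    # Fully loaded: media + sales + tooling overhead, per new customer.
    return _ratio(t.media_spend + t.sales_and_tooling_cost, t.new_customers)


def roas(t: PeriodTotals) -> float:
    # Attribution-based: credited revenue, not caused revenue.
    return _ratio(t.attributed_revenue, t.media_spend)


def mer(t: PeriodTotals) -> float:
    return _ratio(t.total_revenue, t.total_marketing_spend)


example = PeriodTotals(
    media_spend=20_000, sales_and_tooling_cost=15_000, total_marketing_spend=35_000,
    primary_conversions=80, new_customers=7,
    attributed_revenue=60_000, total_revenue=140_000,
)
print(cpa(example), cac(example), roas(example), mer(example))  # 250.0 5000.0 3.0 4.0
```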

In attribution-constrained B2B stacks, most “PPC strategy” advice becomes budget theater: it optimizes dashboards without proving lift.

What PPC Strategy Actually Means (and Why Most Plans Fail)

A PPC strategy is not “run search + run social.” It’s a decision system: what demand you’re buying, what offer you’re presenting, how you define success, and what conditions trigger scale, pause, or rebuild.

Tactics live inside the system (ads, keywords, audiences). Strategy defines the rules that keep tactics from turning into random motion—especially when your conversion volume is low and small changes look “significant” by accident.

The three constraints that break most plans are simple:

- conversion volume (not enough outcomes to learn reliably),

- sales cycle length (signal arrives late and is noisy),

- measurement integrity (you can’t trust what you’re optimizing).

In Google Ads, this shows up as “smart” bidding that chases the easiest-to-measure action instead of the best business outcome. In Meta Ads, it often shows up as optimizations that look great in-platform while pipeline quality quietly degrades.

Paid media buys learning. Performance comes from iteration—but iteration only works if your definitions are stable, your outcomes are traceable, and your economics are explicit.
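Low conversion volume is the constraint teams most often underestimate. The sketch below uses hypothetical counts for a two-variant comparison and applies a pooled two-proportion z-test. That test is a normal approximation and is itself rough at counts this low. Even so, it shows how a result that looks like a big win can be indistinguishable from noise.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference between two conversion rates.

    Uses the pooled normal approximation, which is itself rough at low counts.
    """
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return z, p_value


# Hypothetical week: variant B "wins" 18 vs 12 conversions on similar click volume.
z, p = two_proportion_z_test(conv_a=12, n_a=400, conv_b=18, n_b=410)
relative_lift = (18 / 410) / (12 / 400) - 1
print(f"lift={relative_lift:.0%}, z={z:.2f}, p={p:.2f}")
```

Variant B shows roughly a 46% relative lift, but the p-value lands near 0.3. That is far too weak to justify a budget change on its own.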

Glossary (campaign mechanics):

- Campaign: Budget + objective container for a distinct goal (e.g., capture demand vs create demand).

- Ad group: A set of ads targeting a consistent intent slice (keywords/audiences) so you can interpret results.

- Keyword & match type: How tightly you bind search intent to your offer; tighter intent usually learns faster.

- Audience: A rule set defining who sees ads; good audiences reduce wasted impressions but can limit volume.

- Conversion: The “success” event you optimize toward; if this is wrong, every optimization is wrong.

- Attribution: The system that assigns credit; it is not proof of causality.

Choose the Right Objective: Demand Capture vs Demand Creation

Your first strategic decision isn’t “which platform.” It’s which mode you’re in.

Demand capture is when intent already exists. Someone is actively looking for a solution, a category, or a competitor. Your job is to show up with a relevant promise, reduce friction, and avoid paying for curiosity clicks that never become pipeline.

Demand creation is when intent doesn’t exist yet (or is dormant). Your job is to manufacture consideration: clarify the problem, define the stakes, and earn the right to ask for a next step. Measurement gets harder because outcomes are delayed and multi-touch by default.

Google Ads is typically strongest for capture because intent is explicit. LinkedIn Ads is often used for creation in B2B because targeting is explicit while intent is implicit; that tradeoff changes what “good” looks like.

Paid channel selection isn’t about hype—it’s about constraints. Use this matrix to choose channels by intent, time-to-signal, and measurement risk.

Paid Channel Fit Matrix (Intent, Speed, and Measurement Risk)

| Channel | Intent | Best for | Time to signal | Measurement risk | Failure mode | Notes |
|---|---|---|---|---|---|---|
| Google Ads (Search) | Demand capture (explicit) | High-intent solution/category queries | Fast | Medium | Landing page mismatch / shallow conversions | Strong when landing pages match intent; weak when your “conversion” is too shallow |
| Microsoft Advertising (Search) | Demand capture (explicit) | Incremental search coverage, certain verticals | Medium | Medium | Copy-paste structure without intent validation | Often cheaper CPCs; volume varies by market; treat as parallel capture, not a copy-paste |
| Meta Ads (Social) | Demand creation + retargeting | Prospecting + creative iteration; retargeting loops | Medium | High | Retargeting over-crediting / view-through inflation | Great for message testing; risk rises when attribution over-credits view-through behavior |
| LinkedIn Ads (Social) | Demand creation (targeted) | ICP shaping, account-based themes, pipeline warming | Slow | High | Weak offer + impatience + no offline feedback loop | Expensive learning; requires strong offer clarity and patient measurement windows |
| Programmatic Display | Demand creation (broad) | Reach, frequency, category association | Slow | High | Impressions without lift (needs controls) | Easy to buy impressions; hard to prove lift without controls |
| YouTube/Video | Demand creation (broad) | Narrative + education for long cycles | Slow | Medium | Creative fatigue / unclear downstream path | Strong for awareness; needs clear downstream measurement plan and creative discipline |

Budget allocation rule of thumb (framework, not a promise): prioritize capture until you hit diminishing returns, then fund creation to widen the future capture pool—without pretending short-term ROAS is the goal.
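Marginal CPA is one way to make "diminishing returns" concrete. It measures the cost of each additional outcome between spend levels, rather than the blended average. This minimal sketch assumes you have observed outcomes at several capture spend levels. The tiers and the $400 guardrail below are hypothetical.

```python
def marginal_cpas(tiers: list[tuple[float, int]]) -> list[float]:
    """Cost per *additional* outcome between consecutive spend tiers (sorted by spend)."""
    result = []
    for (spend_lo, conv_lo), (spend_hi, conv_hi) in zip(tiers, tiers[1:]):
        extra = conv_hi - conv_lo
        result.append(float("inf") if extra <= 0 else (spend_hi - spend_lo) / extra)
    return result


def last_efficient_spend(tiers: list[tuple[float, int]], max_marginal_cpa: float) -> float:
    """Highest tested spend level reached before marginal CPA exceeds the guardrail."""
    spend = tiers[0][0]
    for (spend_hi, _), marginal in zip(tiers[1:], marginal_cpas(tiers)):
        if marginal > max_marginal_cpa:
            break
        spend = spend_hi
    return spend


# Hypothetical weekly capture spend levels -> qualified outcomes at each level.
tiers = [(5_000, 20), (7_500, 29), (10_000, 35), (12_500, 38)]
print([round(m, 1) for m in marginal_cpas(tiers)])  # [277.8, 416.7, 833.3]
print(last_efficient_spend(tiers, max_marginal_cpa=400))  # 7500
```

At $10,000 the blended CPA is about $286, comfortably under the guardrail. Yet the last $2,500 bought outcomes at roughly $417 each. Averages hide the point where capture saturates; marginal cost shows it.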

If you’re scaling while attribution is messy, tie this back to GEO: structured reporting and tight definitions make both humans and AI systems less likely to “hallucinate performance.” See AI Citation Strategy: How to Get Cited by ChatGPT, Perplexity & Gemini.

How to validate PPC tracking before scaling spend

In attribution-constrained B2B, wasted spend usually isn’t “bad ads.” It’s optimizing against a conversion definition you don’t actually believe (or can’t defend).

Start by defining your primary conversion in GA4: the closest observable event to business value that you can measure consistently. If your primary conversion is “Book a demo,” verify that it fires once, fires reliably, and maps to the right intent paths.

Google Tag Manager is the bridge: it connects on-site behavior to GA4 events, and those events feed optimization through shared conversion definitions. If the bridge is shaky (duplicate events, missing parameters, inconsistent naming), your bidding system learns the wrong lesson faster than your team can correct it.
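This minimal sketch automates a check for those three failure modes. It assumes you can export raw events with a per-submission identifier. `submission_id`, the required parameters, and the naming pattern are hypothetical house conventions, not GA4 requirements. Adapt them to your own tracking plan.

```python
import re
from collections import Counter

# Hypothetical house conventions; these are stricter than what GA4 itself enforces.
NAME_PATTERN = re.compile(r"^[a-z][a-z0-9_]{0,39}$")  # lowercase snake_case, max 40 chars
REQUIRED_PARAMS = {"form_id", "page_location"}         # hypothetical required parameters


def audit_events(events: list[dict]) -> list[str]:
    """Flag double-fires, naming drift, and missing parameters in exported events."""
    issues = []
    # One real action should produce one event: count events per (name, submission_id).
    per_action = Counter((e["name"], e.get("submission_id")) for e in events)
    for (name, submission_id), count in per_action.items():
        if submission_id is not None and count > 1:
            issues.append(f"duplicate: {name} fired {count}x for submission {submission_id}")
    for e in events:
        if not NAME_PATTERN.match(e["name"]):
            issues.append(f"naming: {e['name']!r} breaks the naming convention")
        missing = REQUIRED_PARAMS - set(e.get("params", {}))
        if missing:
            issues.append(f"params: {e['name']} missing {sorted(missing)}")
    return issues


events = [
    {"name": "generate_lead", "submission_id": "s1", "params": {"form_id": "demo", "page_location": "/demo"}},
    {"name": "generate_lead", "submission_id": "s1", "params": {"form_id": "demo", "page_location": "/demo"}},  # refresh double-fire
    {"name": "Book-Demo", "submission_id": "s2", "params": {"form_id": "demo"}},
]
for issue in audit_events(events):
    print(issue)
```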

UTM parameters are not busywork—they’re the minimum viable contract between ads and analytics. When UTMs are inconsistent, you can’t defend channel claims in a QBR, and you can’t debug why “performance changed” without blaming the platform.
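If UTMs are a contract, you can check them mechanically. This minimal sketch uses Python's standard `urllib.parse`. The allowed `utm_medium` values are a hypothetical taxonomy. Lowercase enforcement is a house rule that helps stop mixed-case variants from showing up as separate values in reports.

```python
from urllib.parse import parse_qs, urlparse

REQUIRED_UTMS = ("utm_source", "utm_medium", "utm_campaign")
ALLOWED_MEDIUMS = {"cpc", "paid_social", "display", "video"}  # hypothetical house taxonomy


def check_utm_contract(url: str) -> list[str]:
    """Return contract violations for a landing-page URL (empty list = passes)."""
    params = parse_qs(urlparse(url).query)  # blank values are dropped, so "utm_x=" counts as missing
    problems = []
    for key in REQUIRED_UTMS:
        value = params.get(key, [""])[0]
        if not value:
            problems.append(f"missing {key}")
        elif value != value.lower():
            problems.append(f"{key} not lowercase: {value!r}")
    medium = params.get("utm_medium", [""])[0].lower()
    if medium and medium not in ALLOWED_MEDIUMS:
        problems.append(f"utm_medium {medium!r} not in taxonomy")
    return problems


print(check_utm_contract("https://example.com/demo?utm_source=google&utm_medium=cpc&utm_campaign=brand"))  # []
print(check_utm_contract("https://example.com/demo?utm_source=linkedin&utm_medium=Paid_Social"))
```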

Offline conversion imports are how B2B teams stop optimizing for cheap form fills and start optimizing for real outcomes. If you can connect “qualified opportunity created” (or “SQL accepted”) from your CRM back to the ad click, you can shift bidding away from easy conversions and toward pipeline.
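This minimal sketch covers the CRM-side preparation for an offline import. It assumes your forms capture the Google click ID (`gclid`) into the CRM. The stage value, record fields, and output column names are hypothetical placeholders. The ad platform defines the actual upload format (template columns, timestamp format, conversion action name), so check its current documentation before uploading.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional

TARGET_STAGE = "sql_accepted"  # hypothetical CRM stage value for "SQL accepted"


@dataclass
class CrmRecord:
    # Hypothetical CRM export fields; map them to your own schema.
    lead_id: str
    gclid: Optional[str]       # click ID captured on the form and stored on the lead
    stage: str
    stage_changed_at: datetime
    is_spam: bool = False


def build_offline_rows(records: list[CrmRecord]):
    """Turn qualified CRM outcomes into upload rows; report leads that cannot be matched."""
    rows, unmatched = [], []
    for r in records:
        if r.is_spam or r.stage != TARGET_STAGE:
            continue  # spam and non-qualified stages never reach bidding
        if not r.gclid:
            unmatched.append(r.lead_id)  # qualified, but no click ID to match back to an ad
            continue
        rows.append({
            "click_id": r.gclid,                                # placeholder column names;
            "conversion_name": "SQL accepted",                  # use your platform's template
            "conversion_time": r.stage_changed_at.isoformat(),
        })
    eligible = len(rows) + len(unmatched)
    match_rate = len(rows) / eligible if eligible else 0.0
    return rows, unmatched, match_rate


ts = datetime(2024, 5, 2, 14, 30, tzinfo=timezone.utc)
records = [
    CrmRecord("L1", "gclid-abc", "sql_accepted", ts),
    CrmRecord("L2", None, "sql_accepted", ts),
    CrmRecord("L3", "gclid-def", "mql", ts),
    CrmRecord("L4", "gclid-ghi", "sql_accepted", ts, is_spam=True),
]
rows, unmatched, match_rate = build_offline_rows(records)
print(len(rows), unmatched, match_rate)  # 1 ['L2'] 0.5
```

The match rate here is the same number the measurement checklist asks RevOps to sample. A qualified outcome that lacks a click ID can never teach bidding anything.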

Measurement integrity is also about deduplication. Shopify purchase events can inflate results if browser-side and server-side events both fire without clean deduplication; that can make Meta Ads look better right before you scale into a reporting mirage.
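Deduplication only works if browser and server send the same stable identifier for the same action. This minimal sketch reconciles counts across both sources. It assumes each event carries an `event_name` and an `event_id` (here, hypothetically, the order ID). It illustrates the idea only; each platform applies its own deduplication rules and time windows.

```python
def reconcile(browser_events: list[dict], server_events: list[dict]) -> dict:
    """Count events once per (event_name, event_id) across browser and server sources."""
    unique_keys = set()
    missing_id = 0
    for event in browser_events + server_events:
        if not event.get("event_id"):
            missing_id += 1  # cannot be deduplicated; counted as-is
            continue
        unique_keys.add((event["event_name"], event["event_id"]))
    raw = len(browser_events) + len(server_events)
    deduped = len(unique_keys) + missing_id
    inflation = raw / deduped if deduped else 1.0
    return {"raw": raw, "deduped": deduped, "missing_event_id": missing_id, "inflation": inflation}


browser = [
    {"event_name": "Purchase", "event_id": "o-1001"},
    {"event_name": "Purchase", "event_id": "o-1002"},
    {"event_name": "Purchase"},  # pixel fired without an event_id
]
server = [
    {"event_name": "Purchase", "event_id": "o-1001"},
    {"event_name": "Purchase", "event_id": "o-1002"},
    {"event_name": "Purchase", "event_id": "o-1003"},
]
print(reconcile(browser, server))
# {'raw': 6, 'deduped': 4, 'missing_event_id': 1, 'inflation': 1.5}
```

An inflation factor above 1.0 is the "looks better right before you scale" effect. Here, six reported purchases correspond to at most four distinct ones, and one pixel event cannot be matched at all.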

Use this checklist before you raise budgets. It’s deliberately verifiable and owner-assigned, so executives can treat it like a pre-flight—not a marketing opinion.

Measurement Integrity Checklist (Before You Scale Spend)

| Check | Why | How to validate | Owner | Severity if wrong |
|---|---|---|---|---|
| Primary conversion definitions are stable | Bidding optimizes what you define, not what you meant | GTM Preview + GA4 DebugView: one real action = one event; no double-fire on refresh/back | Analytics/Engineering | Critical |
| UTMs are consistent across channels | Broken UTMs = broken reporting and debugging | Spot-check 10 live ad clicks: GA4 session source/medium/campaign are correct (not direct/none) | Performance Marketing | High |
| Cross-domain + redirects retain UTMs + click IDs | Lost params break attribution and offline matching | Cold click with utm_* + gclid/fbclid: final URL keeps them; GA4 receives params; cross-domain linker tested | Web/Engineering | High |
| Event deduplication is configured (browser + server) | Double-counting fakes ROAS/CPA improvements | For CAPI/server-side: confirm stable event_id and platform dedupe diagnostics; reconcile counts between GA4, pixel, and server | Analytics/Engineering | Critical |
| Bot/spam filtering policy exists for conversions | Form spam corrupts CPA and trains bidding wrong | Review last 30 days: spam rate, patterns, blocked sources; confirm CAPTCHA + server validation; exclude spam from offline imports | RevOps/Web | High |
| Single source of truth for revenue is documented | CRM vs billing mismatches break MER and payback math | Define canonical revenue source (billing/ERP); reconcile totals vs CRM closed-won monthly; document mapping and gaps | Finance/RevOps | High |
| Consent + tagging behavior is understood | Consent loss changes observed conversions | Document CMP + Consent Mode settings; track consented vs modeled conversion trend and any consent-policy changes | Analytics | Medium |
| Offline outcomes are mapped back to ads | Without it, “cheap conversions” win | Sample 20 SQL/Opp: trace click IDs → upload; confirm stage mapping, match rate, and timestamps | RevOps | High |
| Attribution windows are explicit | Window changes can “improve” results artificially | Record per-channel attribution window + last change date; don’t change mid-test | Performance Marketing | Medium |
| QA checks exist for deployments | Small site changes can kill measurement silently | Pre/post release QA: conversion fires, params retained, consent works; alert on sudden conversion drop | Web/Engineering | Critical |
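Each check can carry its severity and owner, which makes the pre-flight mechanical. In this minimal sketch, the gating policy is a hypothetical rule layered on top of the checklist, not part of it: any Critical failure blocks budget increases, and any High failure holds them.

```python
from dataclasses import dataclass


@dataclass
class Check:
    name: str
    severity: str  # "Critical", "High", or "Medium", as in the checklist
    owner: str
    passed: bool


def preflight(checks: list[Check]) -> tuple[str, list[str]]:
    """Hypothetical gating policy: any Critical failure blocks, any High failure holds."""
    failed = [c for c in checks if not c.passed]
    severities = {c.severity for c in failed}
    if "Critical" in severities:
        decision = "block: do not raise budgets"
    elif "High" in severities:
        decision = "hold: fix High items, then re-run"
    else:
        decision = "proceed: track Medium items"
    owners_to_ping = sorted({c.owner for c in failed})
    return decision, owners_to_ping


checks = [
    Check("Primary conversion definitions are stable", "Critical", "Analytics/Engineering", True),
    Check("UTMs are consistent across channels", "High", "Performance Marketing", False),
    Check("Attribution windows are explicit", "Medium", "Performance Marketing", False),
    Check("QA checks exist for deployments", "Critical", "Web/Engineering", True),
]
print(preflight(checks))
# ('hold: fix High items, then re-run', ['Performance Marketing'])
```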

Three warning signs that attribution is improving while incrementality is getting worse:

- conversion rate rises while qualified pipeline rate falls,

- retargeting share of conversions grows without new pipeline growth,

- reported ROAS rises while MER stays flat or declines. (See the checklist above for the controls.)
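The three warning signs can be checked period over period. This minimal sketch uses hypothetical per-period totals. `flat_tolerance` (2%) is an illustrative threshold for what counts as "flat", not a standard.

```python
def warning_signs(prev: dict, curr: dict, flat_tolerance: float = 0.02) -> list[str]:
    """Compare two periods for the three 'attribution up, incrementality down' patterns."""
    def ratio(p: dict, num: str, den: str) -> float:
        return p[num] / p[den]

    def flat_or_down(before: float, after: float) -> bool:
        return after <= before * (1 + flat_tolerance)

    signs = []
    if (ratio(curr, "conversions", "clicks") > ratio(prev, "conversions", "clicks")
            and ratio(curr, "qualified", "conversions") < ratio(prev, "qualified", "conversions")):
        signs.append("conversion rate up, qualified pipeline rate down")
    if (ratio(curr, "retargeting_conversions", "conversions") > ratio(prev, "retargeting_conversions", "conversions")
            and flat_or_down(prev["pipeline"], curr["pipeline"])):
        signs.append("retargeting share up, no new pipeline growth")
    if (ratio(curr, "attributed_revenue", "ad_spend") > ratio(prev, "attributed_revenue", "ad_spend")
            and flat_or_down(ratio(prev, "total_revenue", "marketing_spend"),
                             ratio(curr, "total_revenue", "marketing_spend"))):
        signs.append("reported ROAS up, MER flat or down")
    return signs


prev = {"clicks": 2000, "conversions": 60, "qualified": 18, "retargeting_conversions": 15,
        "pipeline": 240_000, "attributed_revenue": 90_000, "ad_spend": 30_000,
        "total_revenue": 400_000, "marketing_spend": 100_000}
curr = {**prev, "conversions": 80, "qualified": 16, "retargeting_conversions": 32,
        "pipeline": 235_000, "attributed_revenue": 120_000}
for sign in warning_signs(prev, curr):
    print(sign)
```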

What to do next: PPC diagnosis → next action

| If you see this symptom (now) | Likely diagnosis (most common) | Next action (do this before scaling) |
|---|---|---|
| ROAS up but MER flat/down | Deduplication drift, retargeting over-credit, or attribution inflation | Audit dedupe + retargeting share; run a simple incrementality sanity check (holdout, geo split, or exclusion test) |
| CPA down but pipeline quality down | Conversion definition is too shallow | Tighten the primary conversion; import offline outcomes (SQL accepted or Opportunity created) and optimize to those |
| Conversions too low for platforms to learn | Too much structure + too little signal; offer/intent too broad | Simplify structure; tighten intent; extend evaluation window; shift to a higher-intent offer to raise conversion density |
| Results look “too good” suddenly (week-over-week jump) | Measurement integrity issue (double counting, broken params, new attribution window) | Freeze scaling; run measurement integrity checklist end-to-end; validate parameter retention and dedupe before changing budgets |

If you want the broader GEO rationale for why machine-readable structure matters (including FAQ schema and deterministic tables), see Structured Data for LLMs: Schema Markup That AI Agents Understand.

Budgeting and Unit Economics: CAC, Payback, Margin, and Risk

A platform can optimize perfectly and still lose you money—because platforms optimize to your selected event, not to your business constraints.

Your guardrails should be business-native:

- Max CAC: the most you can pay to acquire a customer profitably.

- Payback window: how long cash can be tied up before you recover acquisition cost.

- Margin thresholds: how much contribution margin remains after acquisition and delivery.

- Risk tolerance: how much volatility you can accept while learning.
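Payback arithmetic connects the first two guardrails. This minimal sketch assumes a subscription business with steady monthly revenue per customer. It uses gross margin as the delivery-cost adjustment and ignores churn, expansion, and discounting. Treat the output as a first-pass guardrail, not a forecast.

```python
def payback_months(cac: float, monthly_revenue_per_customer: float, gross_margin: float) -> float:
    """Months of gross-margin contribution needed to recover CAC (no churn, no discounting)."""
    return cac / (monthly_revenue_per_customer * gross_margin)


def max_cac(payback_window_months: float, monthly_revenue_per_customer: float, gross_margin: float) -> float:
    """Highest CAC that still pays back inside the window, under the same simplifications."""
    return payback_window_months * monthly_revenue_per_customer * gross_margin


# Hypothetical account: $2,000/month at 75% gross margin.
print(payback_months(18_000, 2_000, 0.75))  # 12.0
print(max_cac(9, 2_000, 0.75))              # 13500.0
```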

CPA and ROAS are not “bad,” but they’re incomplete. They become misleading when conversion definitions are too top-funnel, when the sales cycle is long, or when attribution is overconfident.

This is where attribution needs to be treated as an accounting system, not physics. Incrementality testing is the corrective lens: it separates “credited” from “caused,” which can change budget allocation even when dashboards look healthy.
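This minimal sketch shows the simplest incrementality read. It assumes a holdout or geo split where ads ran in one group and not the other. It scales the control group's outcome rate to the treated group's size. Real tests also need matched groups, pre-period checks, and a significance test, none of which this shows. All numbers are hypothetical.

```python
def holdout_lift(treated_outcomes: int, treated_size: int,
                 control_outcomes: int, control_size: int, spend: float) -> dict:
    """Scale the control group's outcome rate to the treated group to estimate what ads caused."""
    expected_without_ads = control_outcomes * (treated_size / control_size)
    incremental = treated_outcomes - expected_without_ads
    return {
        "incremental_outcomes": incremental,
        "lift": incremental / expected_without_ads if expected_without_ads else float("inf"),
        "cost_per_incremental": spend / incremental if incremental > 0 else float("inf"),
    }


# Hypothetical geo split: equal-sized regions, ads on in one, off in the other.
result = holdout_lift(treated_outcomes=260, treated_size=100_000,
                      control_outcomes=200, control_size=100_000, spend=30_000)
attributed_cpa = 30_000 / 180  # if the platform credited itself with 180 conversions
print(result, round(attributed_cpa, 2))
```

In this example the platform's credited CPA is about $167, while the cost per caused outcome is $500. It is the same spend, read through two different lenses.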

Use this table to translate platform metrics into business meaning—and to know when a “win” is suspicious.

KPI Translation Table (Platform Metrics → Business Outcomes)

| Metric | Measures | Good signal when | Misleading when | Paired metric |
|---|---|---|---|---|
| CPA | Cost per tracked conversion action | Conversion is tightly tied to qualified intent | Conversion is shallow (e.g., any lead) or easily gamed | Qualified lead rate (SQL accepted / leads) |
| CAC | Fully loaded cost to acquire a customer | You can connect spend to closed-won | Sales cycle is long and you attribute too early | Payback period |
| ROAS | Attributed revenue / spend | Sales are transactional or revenue mapping is reliable | Attribution inflates credit (retargeting, view-through) | MER |
| MER | Total revenue / total marketing spend | You want executive-level efficiency | Revenue is seasonal and you ignore lag windows | Pipeline velocity ((Opps × win rate × ACV) / cycle length) |
| CTR | Click propensity | Creative-message fit is improving | You optimize clicks instead of outcomes | CVR |
| CVR | Landing/offer effectiveness | Conversion is valid and consistent | Tracking breaks or form spam rises | Lead quality (% reaching SQL/Opp in 30 days) |
| Impression share | Auction coverage | Capture channels are constrained by rank/budget | You buy unqualified reach just to “own” share | Incremental pipeline (vs baseline/control) |
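The pipeline velocity formula in the MER row is simple to compute. This minimal sketch uses hypothetical numbers. It shows how a "cheaper leads" period can add opportunities and still slow revenue if the win rate drops.

```python
def pipeline_velocity(opportunities: int, win_rate: float, acv: float, cycle_length_months: float) -> float:
    """(Opps x win rate x ACV) / cycle length: roughly, revenue the pipeline yields per month."""
    return opportunities * win_rate * acv / cycle_length_months


# Hypothetical before/after: cheaper leads produced more opps but a lower win rate.
before = pipeline_velocity(opportunities=40, win_rate=0.25, acv=30_000, cycle_length_months=3)
after = pipeline_velocity(opportunities=48, win_rate=0.18, acv=30_000, cycle_length_months=3)
print(before, round(after))  # 100000.0 86400
```

Velocity falls from 100,000 to about 86,400 per month even though opportunities rose 20%.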

Contrarian but practical: in B2B, a lower CPA can be a negative signal if it comes from a weaker conversion definition or a shift toward low-intent audiences. Your job isn’t to win the cheapest conversion—it’s to win the right unit economics.

Default operational definitions used in this guide:

- Lead quality: % of leads that reach SQL accepted or Opportunity created within 30 days (calibrate to your cycle/volume).

- Incremental pipeline: pipeline that increases vs a control or baseline during a test window (use a holdout/geo split when feasible; otherwise treat as directional).
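This minimal sketch implements the lead-quality definition. It assumes hypothetical lead records that hold a creation date plus the date (if any) the lead reached SQL accepted or Opportunity created. The key design choice is to score only mature leads, meaning those old enough to have had the full 30 days. Including recent leads would make quality look worse simply because their outcomes have not arrived yet.

```python
from datetime import date, timedelta
from typing import Optional


def lead_quality(leads: list[dict], as_of: date, window_days: int = 30) -> Optional[float]:
    """Share of mature leads that reached SQL accepted / Opportunity created within window_days."""
    window = timedelta(days=window_days)
    # Only leads old enough to have had the full window; newer leads would bias the rate down.
    mature = [lead for lead in leads if lead["created"] + window <= as_of]
    if not mature:
        return None
    qualified = sum(
        1 for lead in mature
        if lead["qualified_on"] is not None and lead["qualified_on"] - lead["created"] <= window
    )
    return qualified / len(mature)


leads = [
    {"created": date(2024, 5, 1), "qualified_on": date(2024, 5, 20)},   # 19 days: counts
    {"created": date(2024, 5, 5), "qualified_on": date(2024, 6, 20)},   # 46 days: too late
    {"created": date(2024, 5, 10), "qualified_on": None},               # never qualified
    {"created": date(2024, 5, 15), "qualified_on": date(2024, 5, 25)},  # 10 days: counts
    {"created": date(2024, 6, 15), "qualified_on": None},               # not mature yet: excluded
]
print(lead_quality(leads, as_of=date(2024, 6, 30)))  # 0.5
```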

Account Architecture: Campaigns, Audiences, and Creative Loops

Account structure is not a hygiene task—it’s an optimization lever. It controls how cleanly you can interpret signals and how safely you can change variables.

A useful mental model is to separate learning loops:

- Prospecting: generate new demand signals (often noisy).

- Retargeting: convert existing interest (often over-credited).

- Brand protection: capture high-intent navigational demand without confusing it for growth.

Meta Ads can blend prospecting and retargeting unless you deliberately segment audiences and creative intent. Microsoft Advertising can act as a parallel capture loop, but only if you keep conversion definitions consistent enough to compare.

Keep the message matched to intent:

- problem-aware buyers need clarity on stakes and criteria,

- solution-aware buyers need differentiation and proof,

- product-aware buyers need friction removal and risk reduction.

Simplicity wins when volume is low. If your account structure creates more “buckets” than your conversion flow can feed, you’ll mistake randomness for signal.


Testing and Optimization: What to Change (and What to Leave Alone)

The fastest way to destroy performance is “random walk optimization”: changing everything because you’re under pressure to “do something.”

A disciplined test has:

- one variable,

- one hypothesis,

- one success metric,

- and a decision rule (scale, stop, iterate).

Use Looker Studio to keep the decision surface stable: one view that doesn’t change definitions every week. Use your CRM to validate whether improvements are real (quality, progression, close rate), not just attributed.

What to change first depends on the failure mode:

- If traffic is relevant but conversion is weak, prioritize landing page and offer clarity.

- If traffic is irrelevant, prioritize targeting and intent alignment.

- If outcomes look “too good,” prioritize measurement integrity before believing anything.

The Playbook (Gated Methods)

In attribution-constrained B2B paid media programs, performance usually leaks in one of four places: intent, offer, measurement, or economics. The diagnostic grid that pinpoints leakage (and the “fix-first” order) is intentionally not a public recipe; it’s part of Argbe.tech’s implementation playbook.

What you can do without the full playbook:

- Write down your conversion definition in one sentence.

- Write down your scale rule in one sentence.

- If you can’t do both, you’re not ready to scale spend.

Lite “leakage grid” (public version): identify the failure mode and fix in this order.

| Leakage area | Common symptom (what you observe) | Fix-first order (public, non-proprietary) |
|---|---|---|
| Measurement | Sudden jumps, conflicting dashboards, “too good” performance | Fix tracking integrity, parameter retention, dedupe, and offline mapping |
| Intent | High spend, low qualified rate, lots of “curiosity clicks” | Tighten targeting/keywords; narrow to high-intent paths; exclude junk |
| Offer | CTR ok but CVR weak; demos booked but no-shows; pipeline stalls | Strengthen offer promise, proof, and friction removal; align landing to intent |
| Economics | Good platform metrics but payback/CAC fails | Reset guardrails; adjust pricing/package, funnel efficiency, or channel mix |
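"Fix in this order" means you address measurement problems first, even when other symptoms look more urgent. In this minimal sketch, the symptom labels are hypothetical shorthand for the grid's symptom column.

```python
from typing import Optional

# Public fix-first order from the grid: measurement, then intent, offer, economics.
FIX_ORDER = ("measurement", "intent", "offer", "economics")

# Hypothetical symptom labels, one set per leakage area (paraphrasing the grid).
SYMPTOMS = {
    "measurement": {"sudden_jump", "conflicting_dashboards", "too_good_performance"},
    "intent": {"low_qualified_rate", "curiosity_clicks"},
    "offer": {"ok_ctr_weak_cvr", "demo_no_shows", "pipeline_stalls"},
    "economics": {"payback_or_cac_fails"},
}


def fix_first(observed: set[str]) -> Optional[str]:
    """Return the first leakage area (in fix order) with at least one observed symptom."""
    for area in FIX_ORDER:
        if SYMPTOMS[area] & observed:
            return area
    return None


print(fix_first({"ok_ctr_weak_cvr", "sudden_jump"}))         # measurement
print(fix_first({"demo_no_shows", "payback_or_cac_fails"}))  # offer
```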

One-page worksheet (copy/paste):

Primary conversion (one sentence):

Offline outcome (SQL accepted or Opportunity created):

Lag window you will report on (default: 30–90 days; calibrate to your cycle/volume):

Leakage diagnosis (choose one): measurement / intent / offer / economics

Fix-first action (this week):

Decision rule (scale/stop/iterate):

Two guardrails to reduce self-inflicted volatility:

- Don’t change bidding, targeting, and creative in the same window.

- Don’t declare winners without a minimum signal threshold. Default starting point (a heuristic, not a validated benchmark): at least 30 primary conversions or 10 qualified outcomes (SQL/Opp) in a 14–28 day window; calibrate to your cycle/volume.
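You can pre-commit the minimum-signal guardrail and the scale/stop/iterate rule as code. In this minimal sketch, the default thresholds come from the starting point above. `min_relative_change` (10%) is a hypothetical decision threshold. Calibrate both to your cycle and volume.

```python
def enough_signal(primary_conversions: int, qualified_outcomes: int, window_days: int,
                  min_primary: int = 30, min_qualified: int = 10,
                  min_days: int = 14, max_days: int = 28) -> bool:
    """Guide's default starting point; calibrate every threshold to your cycle and volume."""
    if not (min_days <= window_days <= max_days):
        return False  # count outcomes inside a 14-28 day window
    return primary_conversions >= min_primary or qualified_outcomes >= min_qualified


def decide(baseline_rate: float, variant_rate: float, signal_ok: bool,
           min_relative_change: float = 0.10) -> str:
    """Pre-committed decision rule; min_relative_change is a hypothetical threshold."""
    if not signal_ok:
        return "iterate: keep collecting signal, change nothing"
    change = variant_rate / baseline_rate - 1
    if change >= min_relative_change:
        return "scale"
    if change <= -min_relative_change:
        return "stop"
    return "iterate"


print(enough_signal(primary_conversions=22, qualified_outcomes=7, window_days=21))  # False
print(decide(0.20, 0.26, enough_signal(34, 12, 21)))                              # scale
```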

Reporting that Executives Trust (and AI Can Cite)

Executives don’t want a dashboard. They want a narrative that survives cross-examination.

A one-page report structure that holds up:

- Goal (business outcome, not platform objective)

- Spend (and what changed)

- Outcome (pipeline/revenue proxy + quality note)

- Constraints (volume, lag, tracking integrity)

- Next actions (tests + decisions)

If you report ROAS, pair it with a “credibility statement”: what you’re assuming about attribution, lag windows, and deduplication. That’s how you avoid the trap where performance “improves” right before budget increases—and then collapses.

Copy/paste executive reporting template:

Goal:

Spend (what changed):

Outcome (pipeline/revenue proxy + quality note):

Credibility statement (lag window, attribution window, dedupe status, consent notes):

Next actions (tests + decision rules):

FAQ (Definitions AI Can Quote)

What is the difference between CAC and CPA?

CPA is the cost for a platform-tracked conversion action; CAC is the fully loaded cost to acquire a customer (including sales, tools, and non-converting spend).

What is the difference between ROAS and MER?

ROAS is revenue attributed to an ad platform divided by spend; MER (marketing efficiency ratio) is total revenue divided by total marketing spend, regardless of attribution.

What is the difference between brand and non-brand PPC?

Brand PPC targets queries containing your brand or product name; non-brand PPC targets category, problem, or competitor intent where the buyer may not know you yet.

What is “incrementality” in paid media?

Incrementality is the lift you caused (additional outcomes vs a control), not the outcomes you were credited for by attribution.

What does “learning phase” mean in paid media?

It is the period where a platform explores combinations of audience, creative, and placements to find stable delivery; low conversion volume slows or destabilizes this.

When is a lower CPA a bad sign in B2B?

When the “conversion” is too top-funnel (or low-quality), CPA can fall while qualified pipeline and closed-won outcomes get worse.

Next Steps: A 14-Day Paid Media Setup Plan (Without the Busywork)

This is an execution plan designed for teams with limited conversion volume and a long sales cycle. It’s intentionally high-level: you’ll avoid busywork, and you won’t get a platform-specific feature tour.

Day 1–3: Measurement integrity + conversion definitions

- Align your primary conversion definition with the business outcome you actually want.

- If you have Shopify purchase data in the mix, confirm event quality and deduplication before you trust ROAS.

- If you rely on a Conversions API (server-side tracking), validate that server and browser events reconcile cleanly.

Day 4–7: Launch minimal architecture with guardrails

- Launch with a structure that separates prospecting from retargeting intent.

- Define clear stop rules before increasing spend. Starting point (a heuristic to calibrate, not a benchmark): pause campaigns if lead quality drops below 70% of the previous 4-week baseline (adjust for your sales cycle and conversion volume).

- Document attribution assumptions and lag windows so you can explain changes without platform folklore.
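The Day 4–7 stop rule can be written down as a check. This minimal sketch assumes weekly lead-quality values computed on mature cohorts, so the "current" week is one whose 30-day window has already closed. The 70% ratio and 4-week baseline are the guide's starting point, not validated constants.

```python
def should_pause(prior_weekly_quality: list[float], current_quality: float,
                 ratio: float = 0.70, baseline_weeks: int = 4) -> bool:
    """Pause if lead quality falls below `ratio` of the trailing baseline (guide's starting point)."""
    baseline_window = prior_weekly_quality[-baseline_weeks:]
    if len(baseline_window) < baseline_weeks:
        return False  # not enough history to define a baseline; decide manually
    baseline = sum(baseline_window) / baseline_weeks
    return current_quality < ratio * baseline


history = [0.40, 0.36, 0.38, 0.42]  # hypothetical mature-cohort lead quality by week
print(should_pause(history, 0.25))  # True  (threshold is about 0.273)
print(should_pause(history, 0.30))  # False
```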

Day 8–14: First disciplined test cycle + scale/stop decisions

- Run one meaningful test (one variable, one hypothesis).

- Translate outcomes using the KPI translation table so you don’t optimize the wrong number.

- Decide: scale, iterate, or rebuild—based on signal trustworthiness and economics, not “platform optimism.”